Spoken English is a critical language skill and essential for practical communication. Yet most learning apps on the market still underperform in this area and fail to address the core user pain point: how can learners improve their spoken English effectively?

Analysis of Existing Approaches

Training Institutions

Traditional spoken-English training usually follows this process:

- Heavy listening input: work through large amounts of listening material to build up language exposure.

- Imitation practice: shadowing/repeating to train pronunciation and intonation.

- Free expression: topic-based speaking to improve flexible language usage.

This works, but still has weaknesses:

- Listening materials are often not level-matched, reducing learning efficiency.

- Imitation practice is often unsystematic and does little to target a learner's specific gaps.

- Free-expression sessions often lack realistic simulation and real-time feedback, making it hard to notice and correct mistakes.

AI Speaking Apps

Most speaking apps currently provide:

- Teaching mode: users choose a lesson (for example, self-introduction) as preparation.

- Free dialogue: users choose a scenario and complete AI-driven speaking tasks.

- Performance report: after a lesson/dialogue, AI generates pronunciation/grammar scores.

Despite rich features, common problems remain:

- Weak knowledge-system design: many apps provide only simple dialogues without systematic grammar/vocabulary/sentence-pattern instruction.

- Monotonous practice content: scenario coverage is limited, often repeating similar sentence patterns.

- Shallow feedback: reports mostly score pronunciation/grammar, with limited analysis of logic, wording, and communicative effectiveness.

- Insufficient corrective guidance: errors are marked but targeted correction paths are rarely provided.

- Limited personalization: many products use one-size-fits-all paths rather than adapting to level and goals.

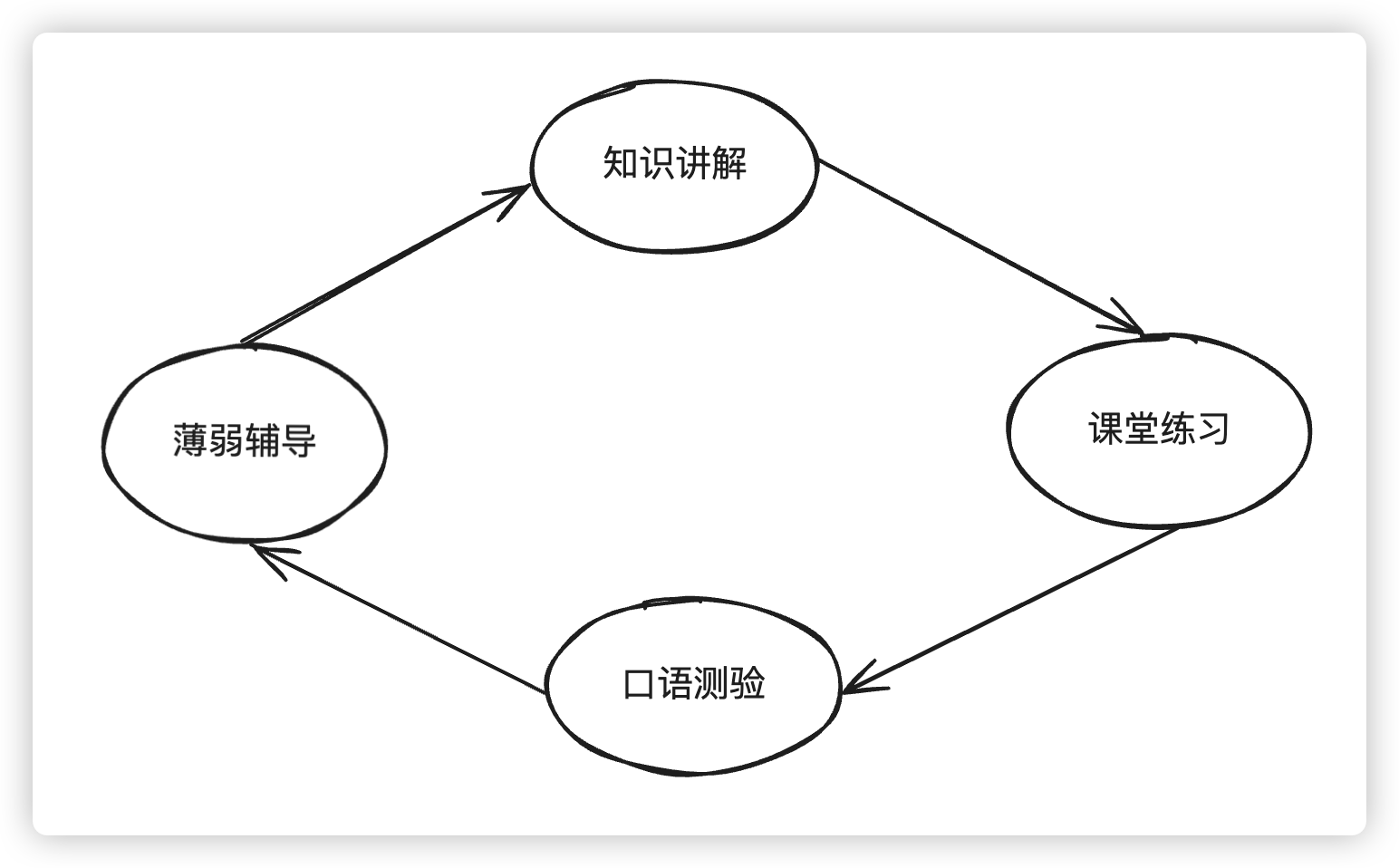

Optimized Solution: A Closed-Loop Spoken English Learning Path

Based on methods used by training institutions and observations from domestic and international speaking products, we designed a closed-loop path to improve spoken English systematically.

Learning Flow

- Knowledge Instruction: systematically teach key grammar, vocabulary, and sentence patterns at a level slightly above the learner’s current ability.

- In-class Practice: convert taught knowledge and examples into Q&A exercises for targeted reinforcement (interleaved with instruction for better efficiency).

- Speaking Test: AI generates scenarios (for example, buying coffee) and guides users to actively use learned knowledge to complete specific tasks.

- Weakness Coaching: AI analyzes test performance, diagnoses weak areas, and delivers targeted reinforcement modules to close the loop.
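The four steps above can be sketched as a small loop. This is only an illustration under stated assumptions, not the product's implementation: `run_closed_loop`, `passes_test`, and `max_rounds` are hypothetical names, and the speaking test is reduced to a callback that says whether a learning target was used correctly in a given round.

```python
from typing import Callable

# A stage is (stage name, learning targets involved in that stage).
Stage = tuple[str, tuple[str, ...]]


def run_closed_loop(
    targets: list[str],
    passes_test: Callable[[str, int], bool],  # hypothetical stand-in for the AI speaking test
    max_rounds: int = 3,
) -> list[Stage]:
    """Return the ordered stages a learner goes through for one lesson."""
    pending = tuple(targets)
    stages: list[Stage] = [
        ("knowledge_instruction", pending),
        ("in_class_practice", pending),
    ]
    for round_index in range(max_rounds):
        # The speaking test stage records which targets were still weak.
        weak = tuple(t for t in pending if not passes_test(t, round_index))
        stages.append(("speaking_test", weak))
        if not weak:
            break  # every target mastered: the loop is closed
        # Coach only the weak targets, then retest them in the next round.
        stages.append(("weakness_coaching", weak))
        pending = weak
    return stages


# Demo: "present continuous" fails the first test and passes after coaching.
def demo_test(target: str, round_index: int) -> bool:
    return not (target == "present continuous" and round_index == 0)


stages = run_closed_loop(["be verbs", "present continuous"], demo_test)
for name, items in stages:
    print(name, items)
```

The point of the sketch is that coaching feeds back into another test on the weak targets only, instead of ending the session at the report.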

Theoretical Foundation

Knowledge Instruction

This part is grounded in Stephen Krashen’s input hypothesis:

If teachers and others who model the language provide enough comprehensible input, the structures that learners are ready to acquire will be present in that input. Krashen argues this can improve grammatical accuracy better than direct grammar instruction alone.

A simple real-world example: when learners want to describe traffic congestion, many say “There were many cars” because they have never had enough exposure to expressions like “heavy traffic,” “traffic jam,” or “traffic congestion.”

Knowledge instruction is fundamentally an input process. By continuously providing slightly challenging but understandable content, learners stay engaged while accumulating enough input for subsequent output stages (in-class practice and speaking tests).
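One way to picture "slightly challenging but understandable" is a level filter. The sketch below assumes a hypothetical integer difficulty scale and a hypothetical `pick_next_material` helper; a real product would need its own way of measuring learner level and material difficulty.

```python
def pick_next_material(materials: list[dict], learner_level: int, step: int = 1) -> list[dict]:
    """Keep materials that are above the learner's level, but by at most `step`."""
    return [
        m for m in materials
        if learner_level < m["level"] <= learner_level + step
    ]


# Hypothetical catalogue; titles and levels are made up for illustration.
catalogue = [
    {"title": "Greetings", "level": 1},
    {"title": "Ordering coffee", "level": 2},
    {"title": "Describing traffic", "level": 3},
    {"title": "Negotiating a lease", "level": 5},
]

next_up = pick_next_material(catalogue, learner_level=2)
print([m["title"] for m in next_up])
```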

Speaking Test

This part is grounded in Merrill Swain’s output hypothesis:

The output hypothesis proposes that language acquisition may occur through language production (spoken or written), because learners are more likely to notice gaps in their knowledge while producing output and to learn while trying to fill those gaps.

In real conversations, learners often plan a sentence in advance (for example, “Can I have a latte?”) but, when it is time to speak, produce something incomplete like “This… Thank you.” They notice the gap only at the moment of actual output.

AI speaking tests simulate realistic situations (shopping, ordering food, etc.), forcing active expression in context. Using taught content as explicit test goals both evaluates mastery and strengthens retention.
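Using taught content as explicit test goals implies some check of whether the learner actually produced it. A deliberately naive sketch follows, assuming the test yields a plain-text transcript and that targets are fixed phrases; `target_coverage` is a hypothetical helper, and a real system would need far more tolerant matching than exact phrases.

```python
import re


def target_coverage(transcript: str, target_phrases: list[str]) -> dict:
    """Report which target phrases appear in the transcript as whole-word matches."""
    # Lowercase and drop punctuation so "latte," and "latte" compare equal.
    words = re.findall(r"[a-z']+", transcript.lower())
    padded = " " + " ".join(words) + " "
    used = [p for p in target_phrases if f" {p.lower()} " in padded]
    missed = [p for p in target_phrases if p not in used]
    ratio = len(used) / len(target_phrases) if target_phrases else 0.0
    return {"used": used, "missed": missed, "ratio": ratio}


result = target_coverage("Hi! Can I have a latte, please?", ["can i have", "to go"])
print(result)
```

The `missed` list is what a later coaching step would pick up.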

Weakness Coaching

This part is grounded in Biggs’ reflective learning:

Reflective learning involves reviewing prior experiences and critically analyzing events. By examining both successful and unsuccessful aspects, learners convert surface learning into deeper learning while identifying gaps for improvement.

Existing AI speaking apps can generate reports and point out issues, but most stop at presenting results and do not effectively guide reflection and remediation.

That is why weakness coaching is the key node in our closed loop. AI analyzes conversation logs from speaking tests, diagnoses mastery of current learning targets, then provides focused drills that guide reflection and correction.

Example: if a learner studying “be verbs” uses the wrong tense in a dialogue, the system can immediately recommend a micro-lesson such as “present continuous (be doing)” along with targeted exercises.
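The diagnosis-to-drill step in this example can be sketched as a lookup from error types to micro-lessons. Everything here is assumed for illustration: the error tags stand in for whatever the AI analysis of the conversation log would output, and the `MICRO_LESSONS` table and `recommend_micro_lessons` function are hypothetical.

```python
from collections import Counter

# Hypothetical mapping from diagnosed error types to micro-lessons.
MICRO_LESSONS = {
    "wrong_tense": "present continuous (be doing)",
    "subject_verb_agreement": "am / is / are agreement",
}


def recommend_micro_lessons(error_tags: list[str], min_count: int = 1) -> list[str]:
    """Rank micro-lessons by how often the matching error occurred in the test."""
    counts = Counter(error_tags)
    return [
        MICRO_LESSONS[tag]
        for tag, n in counts.most_common()  # most frequent errors first
        if n >= min_count and tag in MICRO_LESSONS  # skip errors with no lesson yet
    ]


# Tags as an upstream analysis step might emit them (assumed format).
tags = ["wrong_tense", "wrong_tense", "subject_verb_agreement", "filler_words"]
lessons = recommend_micro_lessons(tags)
print(lessons)
```

Ranking by frequency is just one possible policy; weighting by the current lesson's learning targets would be an equally reasonable choice.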

Advantages

Compared with traditional training and current apps, this closed-loop approach offers:

- Systematic structure: fuller knowledge instruction with level-appropriate difficulty.

- Targeted practice: varied practice and testing to expose and fix errors quickly.

- Real-world readiness: AI scenarios with real-time feedback improve communicative competence.

- Personalization: adaptive path and recommendations based on user level and goals.

- True loop closure: weakness coaching continuously reinforces weak points and drives iterative improvement.